Process Large Sequence Efficiently

You are given a number n and need to produce the squares of the values from 0 up to n - 1.

The test code shows that the function is expected to return something iterable, because the judge calls list(process_large_data(n)). That means this problem is not just about getting the right values. It is also about producing them in a memory-friendly way, using Python generator style instead of building a big intermediate list unless you really need one.

For example, if n = 3, the values are 0, 1, 2, so the output sequence should be [0, 1, 4]. If n = 1, the answer is [0]. If n = 0, the answer is an empty sequence.

So the task is to generate squared values lazily for the range 0 through n - 1, while keeping the implementation efficient for large input sizes.

Example Input & Output

Efficient processing of values 0, 1, 2.

Single value processed.

Empty sequence yields empty results.

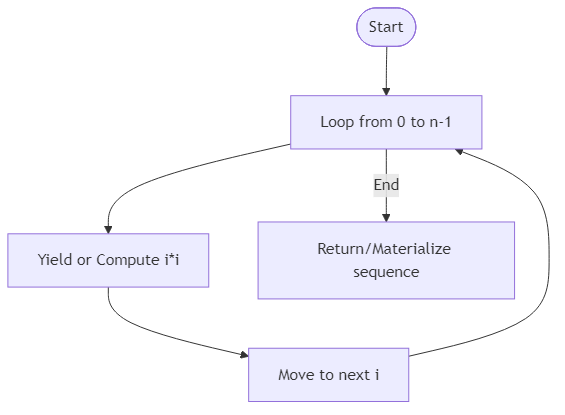

Algorithm Flow

Solution Approach

The main idea here is to use a generator expression or generator function so the values are produced one at a time instead of stored all at once in memory. That matters because the problem title is specifically about processing large sequences efficiently.

If we created a full list immediately, then an input like a very large n would allocate space for every squared value at once. Sometimes that is fine, but it is not the most memory-conscious approach. A generator solves that by yielding values only when the caller asks for them.

The simplest solution is:

Here, range(items) gives the values 0 through items - 1. The generator expression applies the transformation i * i to each one. Nothing is fully materialized yet. The caller can iterate through the results and consume them as needed.

This matches the test harness nicely, because the tests convert the result to a list with list(process_large_data(n)) only at comparison time. In other words, the generator stays lazy until the outside code actually asks for all values.

You could also write this as a generator function using yield, such as a loop that does for i in range(items): yield i * i. That version is equally valid and may feel clearer to readers who are still learning generators. The generator expression is just the shorter form.

The key lesson is why laziness matters. For a large sequence, we often want to process elements one by one instead of storing the entire transformed output in memory before anyone uses it. Even though the tests turn the result into a list at the end, the function itself still demonstrates the correct memory-efficient pattern.

The runtime is O(n) because every value still has to be generated once. The memory advantage is that the generator object itself uses only O(1) extra space aside from the caller's eventual consumption of the produced values.

Best Answers

def process_large_data(items):

for x in range(items):

yield x * xComments (0)

Join the Discussion

Share your thoughts, ask questions, or help others with this Challenge.